Seems like right now every podcast is doing an interview centered around artificial intelligence.

But I waited until I found the right story, one that was truly relevant to our audience in the machining world.

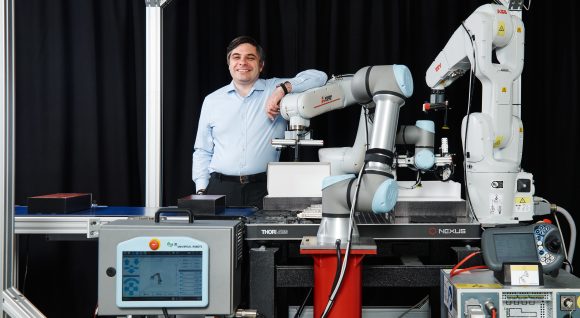

Today’s guest on the podcast, George Konidaris, is the cofounder of the startup Realtime Robotics. He is also a professor of computer science and the director of the Intelligent Robot Lab at Brown University.

Right now, programming a robot arm to perform a repetitive task typically requires a robot integrator to program where every joint of a robot should go. It’s a ridiculous and tedious process.

But with Realtime Robotics’ AI technology, you can instruct a robot to do a task and you don’t have to tell it a zillion steps explaining HOW to do the task.

View the podcast on our YouTube channel.

Follow us on Social and never miss an update!

Facebook: https://lnkd.in/dB_nzFzt

Instagram: https://lnkd.in/dcxjzVyw

Twitter: https://lnkd.in/dDyT-c9h

Interview Highlights

Noah Graff: Explain your company, Realtime Robotics.

George Konidaris: Realtime Robotics is a company that does real-time robot motion planning. We focus on how a robot can automatically generate its own motion. Typically a robot integrator programs every aspect of a robot’s motion in order to accomplish a repetitive task. This means deciding where every joint of a robot arm should go. With our system, you can tell the robot where it needs to put its business end. This is where I would like you to weld, or I would like you to pick up the object over there. We compute the rest of the motion for you.
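To make the idea of "tell the robot where to put its business end" concrete, here is a toy sketch in Python. It is not Realtime Robotics' software or method. It assumes a hypothetical flat, two-joint arm with link lengths `l1` and `l2`, and the function names `two_link_ik` and `forward` are made up for this illustration. Given a target point for the tip of the arm, it works out the joint angles, which is the part an integrator would otherwise set by hand. A real planner also has to handle more joints, obstacles, and timing.

```python
import math


def two_link_ik(x, y, l1=1.0, l2=1.0):
    """Return (shoulder, elbow) angles in radians that put the arm tip at (x, y).

    Hypothetical planar two-link arm. Only one of the two possible
    elbow configurations is returned.
    """
    dist_sq = x * x + y * y
    # Law of cosines gives the elbow angle from the distance to the target.
    cos_elbow = (dist_sq - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target is out of reach for this arm")
    elbow = math.acos(cos_elbow)
    # Point the arm at the target, then correct for the bend at the elbow.
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def forward(shoulder, elbow, l1=1.0, l2=1.0):
    """Return the tip position (x, y) for the given joint angles."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


if __name__ == "__main__":
    # "Put the tool here": the joint angles are computed, not hand-programmed.
    shoulder, elbow = two_link_ik(1.2, 0.5)
    print("joint angles (rad):", shoulder, elbow)
    print("tip lands at:", forward(shoulder, elbow))
```

Running the joint angles back through `forward` is a quick sanity check that the arm tip really ends up at the requested point.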

Graff: How do you control the robots?

Konidaris: The majority of our installations are programmed using a PLC. It used to be that you would have to set every joint on the robot to a specific value.

Now instead, you can send much higher level commands to the PLC.

Graff: So it takes less training than using a typical robot controller?

Konidaris: It takes less training and less effort. In many cases we can reduce PLC programs that are often hundreds of statements long to a single-digit number of statements. You get better efficiency, and we make sure there are no collisions. You don’t have to run what you’ve programmed and eyeball it to make sure it doesn’t collide.

Graff: This can integrate with all different brands?

Konidaris: Yes, we think of robot arms the way most people think of printers, which is that they’re all peripherals. Our job is to provide drivers for those peripherals. To you, they should look just the same because they have similar functionality. You don’t have to go learn the programming language associated with one robot brand. You just plug it in.

Graff: It sounds a little like ChatGPT in that it does a lot of the tedious work for you.

Konidaris: I think the analogy is very apt. One way that I would think about the difference, though, is that ChatGPT is a top-down approach to intelligence. It starts with language, which is very high level, symbolic, and abstract.

But what’s interesting specifically about robots and AI is that language is not yet where the challenges are. The challenges are much lower level. Just moving through space, just doing perception, just generating motion.

We’ve automated so much stuff because we’ve had to deal with the fact that robots are so physically stupid.

Graff: It seems like this technology might take away value from cobots a little bit.

Konidaris: One way to think about cobots is they have two distinguishing features. One is that they’re very easy for a person to program by manipulating the robot. The other one is that cobots are safe to have around people.

One way to think about how that’s been done is they’re light and weak and compliant. By “weak” I mean it’s not going to knock your head off if it hits you.

(Cobots) are not as fast, they’re not as precise. In many industries where you really need throughput, you can’t apply a cobot because it just doesn’t have the performance that you need. What we’re hoping to do is to substitute a different technical solution. The robot is not going to hit stuff because it knows how to not hit stuff.

Graff: These robots, even with their intelligence, still require a professional integrator?

Konidaris: (Yes), the integrator is doing a couple things.

They’re designing your work cell to hit a performance characteristic or meet a specification. That’s a mechanical engineering skill that requires a professional. Also, they’re choosing components like the end-of-arm tooling, the particular conveyor belt, and the PLC. They are integrating those into the work cell and writing the logic that controls them.

But then the third thing that (integrators) often have to do is spend a lot of time hand designing the robot motion. In particular, if there are multiple robots in the work cell, they need to try and coordinate the multi-robot motion ahead of time so that nothing ever collides.

And that’s where the real talent comes. We’ve looked at use cases where it takes 13 weeks of engineering just to get the multi-robot coordination right. We can drop it to one (week) because in our case, that last part, you just plug the robots into the same box and they never hit each other.

Graff: Mostly your product is used in automotive plants?

Konidaris: Yes, that’s right. They have severe throughput constraints.

In many cases, the cost of a single robot isn’t anywhere near the cost of extra cycle time, so they’re happy to pay to add extra robots.

I think a typical statistic we saw is that adding a single robot only gets you an extra 25% throughput speedup, as opposed to the theoretical 100%, which no one ever gets. But with our system you can see more like 75%.

So you can get much more of the win using the extra robot because they can pass pretty close to each other and they’re mutually cognizant of that.
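To put those percentages in shop-floor terms, here is a small worked example in Python. The baseline of 60 parts per hour is a made-up number chosen only for illustration, and `throughput_with_extra_robot` is a hypothetical helper. The 25%, 75%, and 100% figures are the ones quoted in the interview.

```python
def throughput_with_extra_robot(base_rate, speedup):
    """Return parts per hour after adding one robot.

    base_rate: parts per hour before the extra robot (hypothetical figure).
    speedup: fractional gain from the extra robot, e.g. 0.25 for 25%.
    """
    return base_rate * (1 + speedup)


if __name__ == "__main__":
    base_rate = 60  # assumed baseline, not a figure from the interview
    for label, speedup in [
        ("typical (25%)", 0.25),
        ("quoted for Realtime Robotics (75%)", 0.75),
        ("theoretical (100%)", 1.00),
    ]:
        rate = throughput_with_extra_robot(base_rate, speedup)
        print(f"{label}: {rate:.0f} parts per hour")
```

On this assumed baseline, the second robot adds 15 parts per hour in the typical case and 45 parts per hour at the 75% figure, out of a theoretical 60.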

Question: How have you used robots in your machine shop? Or, how would you like to use them?
